Scenario-Based Analysis for Autonomous Driving Features

Enabling Scenario-Driven Safety Assessment Across the Development Lifecycle

Introduction: The Challenge of Demonstrating Safety in Autonomous Driving

Autonomous driving systems must demonstrate safe behavior across a vast range of operational situations. These systems operate in complex environments where road structure, traffic behavior, weather, and vehicle dynamics interact in ways that can produce millions of possible scenarios.

Traditional validation approaches based purely on public road testing are insufficient for demonstrating safety. The space of possible scenarios is simply too large, and the risk of encountering unsafe system behavior during early testing is too high.

This challenge has led the industry to adopt scenario-based safety assessment, where system behavior is evaluated against systematically defined driving scenarios across simulation, controlled testing, and public road validation.

Standards such as:

ISO 26262

ISO 21448

ISO 34501, 34502, 34503, 34504

provide guidance on safety engineering and scenario-based testing for automated vehicles. However, an important practical question remains: How do we connect safety analysis during development with scenario-based verification and validation?

This article proposes a structured approach that integrates safety analysis, behavioral requirements, and scenario-based testing across the safety lifecycle.

A Framework for Scenario-Based Safety Assessment in Autonomous Driving

Scenario-based validation is central to the evaluation of automated driving systems. In the context of ISO 21448, system safety must be evaluated against:

Known scenarios - Scenarios that have been identified during development

Unknown scenarios - Scenarios not explicitly identified during development but that may occur within the ODD

The system must demonstrate acceptable performance across both categories before release.

The challenge is that the combinatorial space of scenarios grows extremely quickly when parameterizing the operational design domain (ODD). Even with moderate parameterization, millions of scenarios can be generated, so systematic prioritization of scenarios becomes essential. The ISO 34501-34504 series of standards provides an overall framework for scenario evaluation. ISO 34502, in particular, presents a scenario-based evaluation framework whose requirements define what needs to be done, and it points to ISO 26262 and ISO 21448 as possible sources for identifying safety-critical scenarios. In this article, I focus more on how scenario-based evaluation gets integrated into the safety lifecycle.
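To make the scale concrete, the sketch below counts a full-factorial scenario space as the product of the number of levels per ODD parameter. The parameter names and levels in `odd_parameters` are hypothetical illustrations, not values from ISO 34503 or any real ODD specification; even this modest grid of ten parameters yields over a million concrete scenarios.

```python
from itertools import product
from math import prod

# Hypothetical ODD parameterization: names and levels are illustrative only.
odd_parameters = {
    "curve_radius_m": [250, 500, 1000, 2000, None],  # None = straight road
    "ego_speed_kph": list(range(40, 131, 10)),        # 40, 50, ..., 130
    "lead_gap_m": [10, 20, 30, 50, 80, 120],
    "lead_decel_mps2": [0, 1, 2, 4, 6, 8],
    "road_friction": [0.3, 0.5, 0.8, 1.0],
    "weather": ["clear", "rain", "fog", "snow"],
    "lighting": ["day", "dusk", "night"],
    "lane_count": [2, 3, 4],
    "cut_in_present": [False, True],
    "pedestrian_present": [False, True],
}


def scenario_count(parameters: dict) -> int:
    """Size of the full-factorial space: product of the level counts."""
    return prod(len(levels) for levels in parameters.values())


def iter_scenarios(parameters: dict):
    """Lazily yield each concrete scenario as a dict, without building the full list."""
    names = list(parameters)
    for values in product(*parameters.values()):
        yield dict(zip(names, values))


print(f"{scenario_count(odd_parameters):,} concrete scenarios")  # 1,036,800 concrete scenarios
print(next(iter_scenarios(odd_parameters)))
```

Enumerating lazily matters here: the count grows multiplicatively with every added parameter, so materializing the whole space quickly becomes impractical, which is exactly why prioritization is needed.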

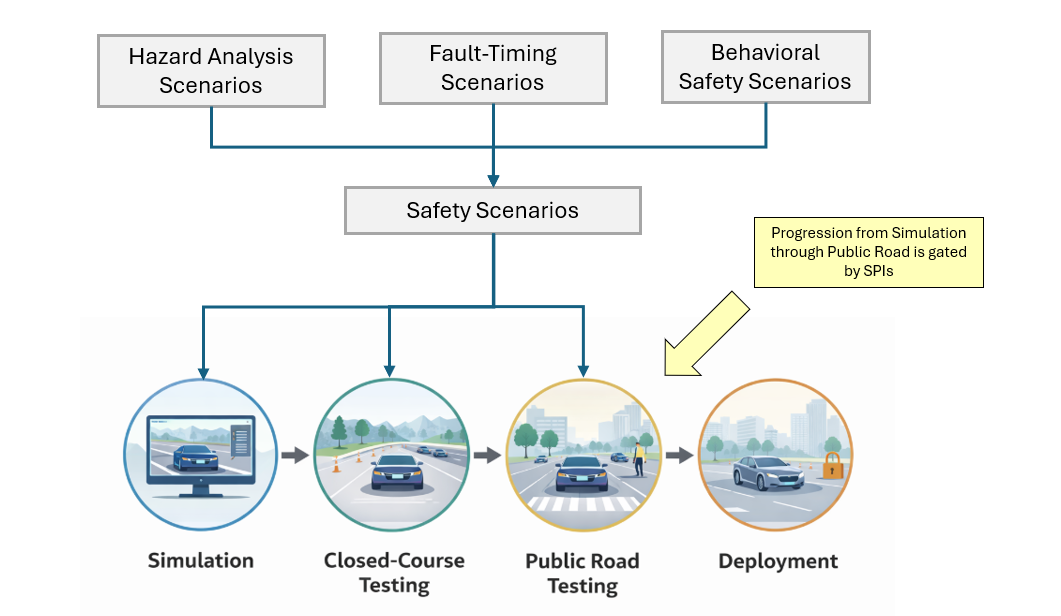

As shown in Figure 1, scenarios are derived from different activities such as hazard analysis (HARA/HIRE), fault timing analysis, and behavioral safety analysis. These scenarios are then used for verification and validation, progressing from simulation to closed-course testing and finally to public road testing, where validation occurs. Progression between stages, from simulation all the way to deployment, is gated by whether the scenarios meet their Safety Performance Indicators (SPIs).

Figure 1. Framework for scenario development

Sources of Safety-Critical Scenarios

A key insight in safety engineering is that the most important scenarios should not be generated randomly. Instead, they should originate from structured safety analyses conducted during system development. Three primary sources of scenarios can guide both development and validation.

Hazard Analysis Scenarios (HARA and HIRE)

Fault-Handling Scenarios (FTTI/FHTI)

Behavioral Performance Scenarios

1-Hazard Analysis Scenarios (HARA and HIRE)

The first source of scenarios originates from safety analyses performed under:

ISO 26262 Hazard Analysis and Risk Assessment (HARA)

ISO 21448 Hazard Identification and Risk Evaluation (HIRE)

These analyses evaluate hazardous situations without considering safety mechanisms or other mitigations. This evaluation determines the risk associated with scenarios across the ODD and leads to the definition of safety goals, each with an ASIL rating (for functional safety). For example, a safety goal such as "The vehicle shall prevent unintended lateral acceleration (ASIL D)" is derived from at least one scenario in the HARA. That same scenario can later be used for scenario-based verification and validation, so the scenarios used to derive safety goals become critical validation scenarios later in the lifecycle.
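As an illustration of how a HARA scenario carries its ASIL forward, the sketch below encodes the ISO 26262-3 ASIL determination. It relies on the well-known property that the standard's ASIL table can be reproduced by summing the S, E, and C class numbers (10 gives ASIL D, 9 gives C, 8 gives B, 7 gives A, anything lower gives QM, and any class 0 gives QM). The `HazardousEvent` record, the scenario ID `HARA-017`, and the S3/E4/C3 classification are hypothetical; a real HARA should be checked against the standard's table itself.

```python
from dataclasses import dataclass

# ASIL by S + E + C; reproduces the ISO 26262-3 ASIL table for S1-S3, E1-E4, C1-C3.
ASIL_BY_SUM = {10: "ASIL D", 9: "ASIL C", 8: "ASIL B", 7: "ASIL A"}


@dataclass(frozen=True)
class HazardousEvent:
    scenario_id: str      # hypothetical ID of the HARA scenario, reused later for V&V
    description: str
    severity: int         # S0..S3
    exposure: int         # E0..E4
    controllability: int  # C0..C3


def determine_asil(severity: int, exposure: int, controllability: int) -> str:
    if not (0 <= severity <= 3 and 0 <= exposure <= 4 and 0 <= controllability <= 3):
        raise ValueError("expected S0-S3, E0-E4, C0-C3")
    if 0 in (severity, exposure, controllability):
        return "QM"  # any class 0 means no ASIL is assigned
    return ASIL_BY_SUM.get(severity + exposure + controllability, "QM")


event = HazardousEvent(
    scenario_id="HARA-017",
    description="Unintended lateral acceleration in a highway curve",
    severity=3, exposure=4, controllability=3,
)
print(event.scenario_id, determine_asil(event.severity, event.exposure, event.controllability))
# HARA-017 ASIL D
```

Keeping the scenario ID on the hazardous event is what lets the same scenario be pulled back out of the HARA when building the validation scenario set.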

2-Fault-Handling Scenarios (FTTI / FHTI)

A second category of scenarios is used to determine timing requirements for safety mechanisms. These scenarios are analyzed to determine the Fault Tolerant Time Interval (FTTI) and the Fault Handling Time Interval (FHTI). The key question is: how quickly must the system detect and mitigate a fault before the hazard becomes unavoidable? For example, if a self-driving vehicle is driving through a curve at highway speed and steering control becomes unavailable:

How long before the vehicle departs the lane?

How quickly must the fault be detected?

How quickly must the mitigation be applied?

These scenarios should be consistent with the scenarios used in the HARA/HIRE, and they can be reused as verification and validation scenarios later in the lifecycle.
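The first timing question can be approximated with simple geometry. This sketch assumes that when steering is lost the vehicle continues straight along the tangent of a curve of radius R at speed v, treats drifting outward by the available lateral margin d (lane departure) as the hazardous event, and uses the time until that happens as a stand-in for the FTTI. It then applies the ISO 26262 relationship FHTI = FDTI + FRTI (fault detection time interval plus fault reaction time interval) and checks that the FHTI fits within the FTTI. The function names and the speed, radius, margin, FDTI, and FRTI values are hypothetical; a real FTTI analysis would use validated vehicle-dynamics models rather than this point-mass approximation.

```python
import math


def time_to_lane_departure(speed_mps: float, curve_radius_m: float, lateral_margin_m: float) -> float:
    """Time for a vehicle that keeps going straight (tangent to the curve) after
    losing steering to drift lateral_margin_m outward from its curved path.

    After time t the vehicle is v*t along the tangent, so its distance from the
    curve centre is sqrt(R^2 + (v*t)^2); setting this to R + d gives
    t = sqrt(2*R*d + d^2) / v.
    """
    if min(speed_mps, curve_radius_m, lateral_margin_m) <= 0:
        raise ValueError("speed, radius and margin must all be positive")
    d = lateral_margin_m
    return math.sqrt(2 * curve_radius_m * d + d * d) / speed_mps


def fhti_within_ftti(ftti_s: float, fdti_s: float, frti_s: float) -> bool:
    """FHTI = FDTI + FRTI (detection + reaction); it must fit within the FTTI."""
    return fdti_s + frti_s <= ftti_s


# Hypothetical case: 108 km/h (30 m/s), 500 m radius, 0.5 m to the outer lane edge.
# Lane departure is assumed to be the hazardous event, so this time approximates the FTTI.
ftti = time_to_lane_departure(30.0, 500.0, 0.5)
print(f"FTTI ~ {ftti:.2f} s")                            # FTTI ~ 0.75 s
print(fhti_within_ftti(ftti, fdti_s=0.1, frti_s=0.4))     # True
```

Because the result scales with sqrt(R)/v, tighter curves and higher speeds shrink the time budget quickly, which is why these scenarios should reuse the same speed and curvature assumptions as the HARA/HIRE.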

3-Behavioral Performance Scenarios

A third category focuses on defining safe behavioral requirements. These scenarios are driven not necessarily by risk analysis but by performance limits within the ODD. Safety Performance Indicators (SPIs) can be used to derive the safe behaviors. Different scenarios require different vehicle capabilities, so different SPIs apply and lead to different functional, performance, and constraint requirements. For example, a vehicle negotiating a high-speed curve will need to meet SPIs for maximum lateral acceleration, maximum jerk, and maximum deviation from the center of the driving lane, whereas a stop-and-go scenario will need to meet SPIs for minimum time to collision (TTC), minimum distance gap, and maximum longitudinal deceleration. The resulting requirements will answer the questions:

How far ahead does the vehicle need to detect and react to other vehicles?

What are the vehicle constraints to drive safely in different scenarios?

What is the required performance of the system?
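For the first question, a common first-order answer is a stopping-distance budget for a stationary obstacle: the distance travelled during the system's reaction time, plus the braking distance v^2/(2a), plus a safety margin. Inverting the same relation gives a constraint of the kind asked for in the second question: the maximum speed at which the required stopping distance still fits inside a given detection range. The function names and the 0.5 s reaction time, 6 m/s^2 deceleration, and 2 m margin are hypothetical assumptions for illustration, not requirements from any standard.

```python
import math


def required_detection_distance(speed_mps, reaction_time_s, max_decel_mps2, margin_m=0.0):
    """Distance to a stationary obstacle at which detection must occur so the
    vehicle can stop short of it: reaction distance + braking distance + margin."""
    return speed_mps * reaction_time_s + speed_mps ** 2 / (2 * max_decel_mps2) + margin_m


def max_safe_speed(detection_range_m, reaction_time_s, max_decel_mps2, margin_m=0.0):
    """Inverse of the above: the largest v with v*t + v^2/(2a) + margin <= range,
    i.e. the positive root v = a * (-t + sqrt(t^2 + 2*(range - margin)/a))."""
    usable_m = detection_range_m - margin_m
    if usable_m <= 0:
        return 0.0
    a, t = max_decel_mps2, reaction_time_s
    return a * (-t + math.sqrt(t * t + 2 * usable_m / a))


v = 100 / 3.6  # 100 km/h in m/s
d = required_detection_distance(v, reaction_time_s=0.5, max_decel_mps2=6.0, margin_m=2.0)
print(f"{d:.1f} m")  # 80.2 m
print(f"{max_safe_speed(d, 0.5, 6.0, 2.0) * 3.6:.0f} km/h")  # 100 km/h
```

The forward function yields a perception requirement (detection range) and the inverse yields an ODD constraint (speed limit for a given sensor range), so one scenario can seed both kinds of requirement.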

The scenarios used to derive these behavioral requirements can and should be used to verify and validate that the self-driving vehicle exhibits the required safe behaviors.
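A minimal sketch of how SPIs from a scenario run might be checked against thresholds, assuming a uniformly sampled trace exported from simulation. `min_ttc` uses the usual constant-velocity definition TTC = gap / closing speed, treated as infinite when the gap is not closing. All function names, trace values, and SPI thresholds here are hypothetical.

```python
import math


def min_ttc(gaps_m, ego_speeds_mps, lead_speeds_mps):
    """Minimum constant-velocity TTC over a trace: gap / closing speed,
    treated as infinite whenever the ego vehicle is not closing on the lead."""
    return min(
        gap / (v_ego - v_lead) if v_ego > v_lead else math.inf
        for gap, v_ego, v_lead in zip(gaps_m, ego_speeds_mps, lead_speeds_mps)
    )


def max_decel(speeds_mps, dt_s):
    """Peak longitudinal deceleration (as a positive number) from a uniformly sampled speed trace."""
    return max(0.0, max((v0 - v1) / dt_s for v0, v1 in zip(speeds_mps, speeds_mps[1:])))


def max_abs_jerk(accels_mps2, dt_s):
    """Peak |jerk| from a uniformly sampled acceleration trace (finite differences)."""
    return max(abs(a1 - a0) / dt_s for a0, a1 in zip(accels_mps2, accels_mps2[1:]))


def evaluate_spis(measured, limits):
    """Return {spi_name: passed}; each limit is ("min", bound) or ("max", bound)."""
    return {
        name: (measured[name] >= bound) if kind == "min" else (measured[name] <= bound)
        for name, (kind, bound) in limits.items()
    }


# Hypothetical stop-and-go trace sampled every 1 s.
gaps = [30.0, 25.0, 20.0, 16.0, 14.0]
ego = [20.0, 18.0, 15.0, 12.0, 10.0]
lead = [15.0, 14.0, 13.0, 12.0, 11.0]

measured = {
    "min_ttc_s": min_ttc(gaps, ego, lead),
    "min_gap_m": min(gaps),
    "max_decel_mps2": max_decel(ego, dt_s=1.0),
}
limits = {  # hypothetical SPI thresholds
    "min_ttc_s": ("min", 4.0),
    "min_gap_m": ("min", 10.0),
    "max_decel_mps2": ("max", 4.0),
}
print(measured)  # {'min_ttc_s': 6.0, 'min_gap_m': 14.0, 'max_decel_mps2': 3.0}
print(evaluate_spis(measured, limits))
# {'min_ttc_s': True, 'min_gap_m': True, 'max_decel_mps2': True}
```

The curve SPIs from the behavioral example (peak lateral acceleration, jerk, and lane-center deviation) plug into `evaluate_spis` the same way, with `max_abs_jerk` applied to a lateral-acceleration trace.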

Scenario Evaluation Across Testing Environments

Given that the number of possible scenarios is extremely large, the only realistic way to verify and validate these scenarios is to rely heavily on simulation before any vehicle testing. Simulation provides a fast assessment of system performance without the cost and time of vehicle testing. However, the gap between simulated and real-world performance remains a real challenge. Therefore, a systematic progression from simulation to vehicle testing is needed, organized into three main stages: simulation, closed-course testing, and public road testing.

Simulation enables evaluation of large scenario spaces, covering millions of parameterized scenarios in a controlled, safe environment where the safety boundaries of the system can be tested. Simulation is used to verify that the system meets SPIs before moving to physical testing.

Closed-course testing allows controlled reproduction of critical scenarios, such as obstacle avoidance maneuvers. The goal is to confirm system performance under real vehicle conditions before performing public road testing.

Public road testing focuses on validating nominal functionality, evaluating system robustness, and collecting real-world scenario data. By the time a system reaches this stage, most safety-critical scenarios should already have been evaluated in simulation and closed-course testing.
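The stage gating described here can be sketched as a simple promotion rule: a scenario set advances from simulation to closed-course testing, then to public road testing and deployment, only when every scenario at the current stage has met its SPIs. The `Stage` enum, the scenario IDs, and the all-must-pass rule are illustrative assumptions; a real program would define its own release criteria.

```python
from enum import IntEnum


class Stage(IntEnum):
    SIMULATION = 1
    CLOSED_COURSE = 2
    PUBLIC_ROAD = 3
    DEPLOYMENT = 4


def blocking_scenarios(spi_results):
    """IDs of scenarios that did not meet their SPIs at the current stage."""
    return sorted(sid for sid, passed in spi_results.items() if not passed)


def next_stage(current, spi_results):
    """Advance one stage only if results exist and every scenario passed its SPIs."""
    if current == Stage.DEPLOYMENT or not spi_results or blocking_scenarios(spi_results):
        return current
    return Stage(current + 1)


# Hypothetical scenario IDs traced to HARA, FTTI, and behavioral analyses.
results = {"HARA-017": True, "FTTI-004": True, "BEH-112": False}
print(next_stage(Stage.SIMULATION, results).name, blocking_scenarios(results))
# SIMULATION ['BEH-112']
results["BEH-112"] = True
print(next_stage(Stage.SIMULATION, results).name)  # CLOSED_COURSE
```

Refusing to promote on an empty result set is a deliberate choice in this sketch: a stage with no evaluated scenarios has demonstrated nothing.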

Scenario Prioritization

Scenarios can be prioritized based on the risk associated with the safety-critical scenarios identified in the HARA and HIRE. For example, scenarios linked to the highest-ASIL safety goals can be tested first, and among those, the scenarios with the highest Exposure rating take top priority. Risk-based prioritization ensures that testing resources are allocated to the most safety-critical conditions first, especially for closed-course and public road testing, where test execution is significantly more costly and time-consuming than in simulation.
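The ASIL-then-Exposure ordering can be expressed as a two-part sort key. In this sketch, `Scenario`, its fields, and the catalog entries are hypothetical; each scenario is assumed to carry the highest ASIL of the safety goals it traces to and its Exposure class from the HARA.

```python
from dataclasses import dataclass

ASIL_RANK = {"QM": 0, "A": 1, "B": 2, "C": 3, "D": 4}


@dataclass(frozen=True)
class Scenario:
    scenario_id: str
    asil: str      # highest ASIL among the safety goals the scenario traces to
    exposure: int  # Exposure class E0..E4 from the HARA


def prioritize(scenarios):
    """Highest ASIL first; within an ASIL, highest Exposure first.
    sorted() is stable, so any remaining ties keep their catalog order."""
    return sorted(scenarios, key=lambda s: (-ASIL_RANK[s.asil], -s.exposure))


catalog = [  # hypothetical scenario catalog
    Scenario("S-01", "B", 4),
    Scenario("S-02", "D", 2),
    Scenario("S-03", "D", 4),
    Scenario("S-04", "QM", 4),
    Scenario("S-05", "C", 3),
]
ranked = prioritize(catalog)
print([s.scenario_id for s in ranked])  # ['S-03', 'S-02', 'S-05', 'S-01', 'S-04']
closed_course_plan = ranked[:3]  # e.g. a hypothetical budget of three physical tests
```

Simulation can afford to run the whole ranked list, while the costlier closed-course and public road stages take only the top of it.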

Integrating Functional Safety and SOTIF

A key benefit of this approach is the integration of leading industry safety standards such as ISO 26262 and ISO 21448. Both functional safety and SOTIF provide guidance on verification and validation activities, such as vehicle-level fault injection (functional safety) and the evaluation of triggering conditions (SOTIF). Other standards, such as ISO 8800, can also be integrated for vehicle-level validation of machine learning components.

Conclusion

Scenario-based safety assessment provides a scalable method for evaluating autonomous driving systems. By linking safety analysis, behavioral requirements, and testing scenarios, engineers can create a structured validation framework that connects development activities with verification and validation.

The approach described in this article emphasizes:

systematic scenario identification

risk-based prioritization

progressive validation from simulation to real-world testing

integration of functional safety and SOTIF

This methodology enables a more structured path toward demonstrating that autonomous driving systems achieve acceptable safety performance across both known and unknown scenarios.