Application of ISO/PAS 8800, ISO 26262, and ISO 21448 for ML-Based Systems

A practical approach to applying leading safety standards to ML-based systems in road vehicles

Introduction

In a previous article, I discussed how behavioral safety analysis and scenario-based engineering can be used to guide the development of safe machine learning (ML) models for road vehicles. Building on that foundation, this article presents a broader framework describing how industry safety standards can be applied in practice when developing ML-based vehicle systems.

A key distinction must be made when discussing the safety of embedded systems that deploy ML models in road vehicles. Unlike traditional software systems, ML-based architectures introduce additional layers of complexity in how functionality is implemented, validated, and ultimately deployed in the vehicle. In theory, fully end-to-end ML systems could be used to perform complex driving tasks. In practice, however, the verification and validation challenges associated with such systems remain extremely difficult, particularly for safety-critical applications such as automated driving.

As a result, the industry is increasingly adopting modular architectures for ML-enabled systems. However, even the concept of “modular” requires careful interpretation. In real vehicle platforms, ML-based functionality rarely exists in isolation. Instead, systems are typically composed of a combination of deterministic software components and learned, non-deterministic models working together within a larger software architecture.

This architectural reality has an important implication for safety engineering. Despite the introduction of ML components, traditional functional safety principles remain highly relevant. Deterministic components responsible for system monitoring, safety mechanisms, and control logic still play a critical role in ensuring safe system behavior.

For this reason, the development of ML-based vehicle systems requires the combined application of multiple safety standards.

General Approach

The safety of ML-based vehicle systems requires a development framework that integrates multiple safety standards. In practice, the standards most relevant for automated driving systems are ISO 26262 (Functional Safety), ISO 21448 (SOTIF), and ISO/PAS 8800 (AI safety for road vehicles). Each of these standards addresses different categories of risk, but they ultimately converge at the system level where the vehicle must operate safely regardless of the underlying cause of hazardous behavior.

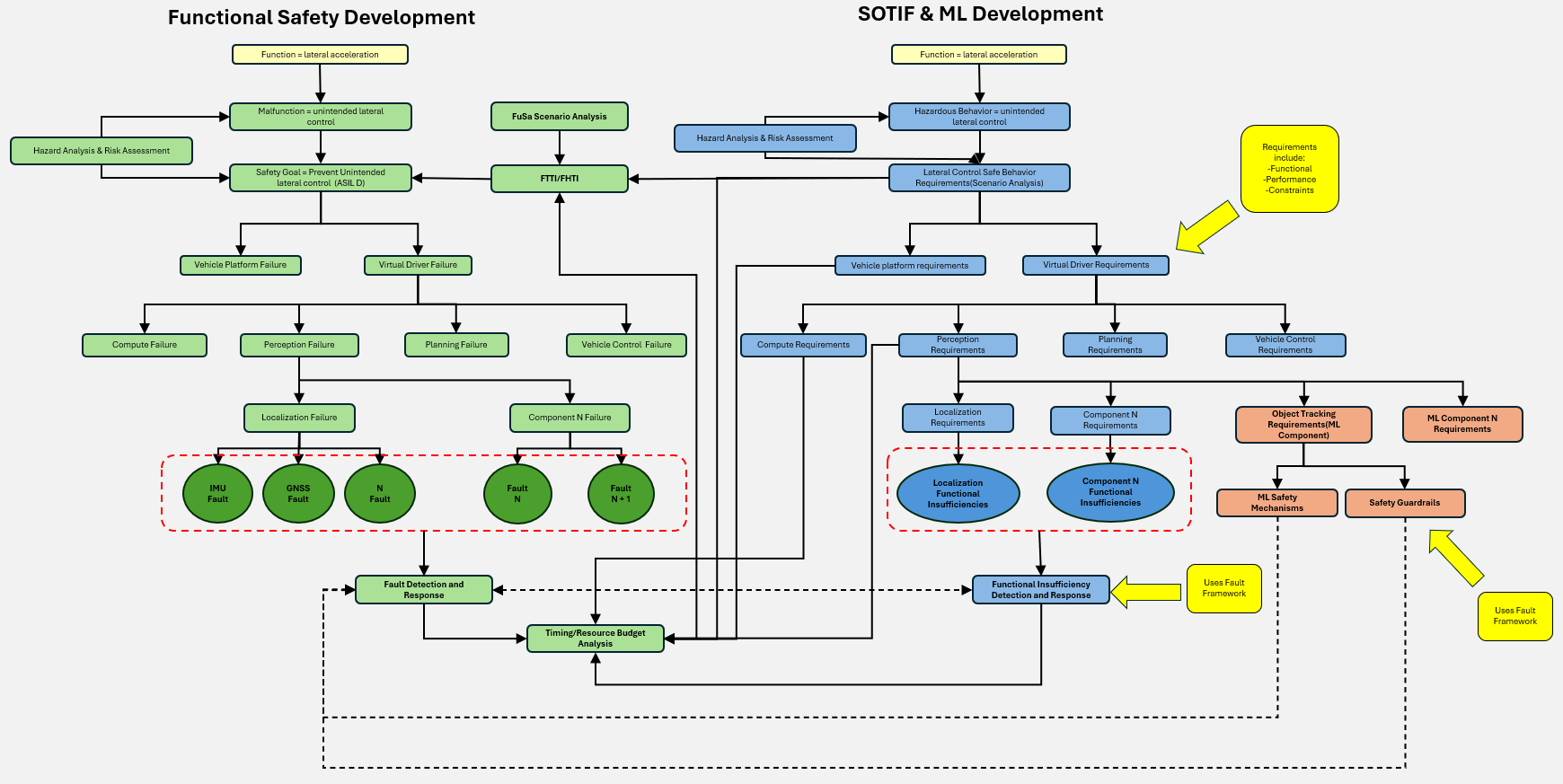

Figure 1 illustrates a practical architecture for applying these standards together when developing ML-enabled systems for road vehicles.

Figure 1. Functional Safety, SOTIF, and AI Safety Approach

Vehicle-Level Hazard Analysis & Risk Assessment

At the vehicle level, unsafe behavior often appears identical regardless of its source. For example, excessive lateral acceleration could be caused by a control system malfunction, degraded perception input, an algorithm design limitation, or an incorrect output produced by a machine learning model. Because of this, the safety development process begins by analyzing vehicle behaviors and identifying potential hazards across the system architecture. In practice, this means performing both Hazard Analysis and Risk Assessment (HARA) from the functional safety perspective and Hazard Identification and Risk Evaluation (HIRE) from the SOTIF perspective.

An important practical consideration is that these two analyses should ideally be based on the same set of operational scenarios and hazardous behaviors. This alignment is necessary because SOTIF HIRE uses the severity and controllability ratings defined in the HARA process. As a result, maintaining a consistent set of scenarios across both analyses ensures that safety risks are evaluated using a coherent framework.
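The sketch below makes the shared-scenario idea concrete. It keeps a single hypothetical record per hazardous event, and both analyses read from that record. The ASIL lookup relies on one property of the ASIL table in ISO 26262-3: it can be reproduced by summing the S, E, and C class indices (sum 10 = D, 9 = C, 8 = B, 7 = A, otherwise QM). The SOTIF rule used here is a simplifying assumption: no further treatment is needed when a hazard is rated S0 or C0. The scenarios and ratings are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HazardousEvent:
    """One scenario/behavior pair shared by HARA (ISO 26262) and SOTIF hazard analysis."""
    scenario: str
    behavior: str
    severity: int         # S0..S3
    exposure: int         # E0..E4
    controllability: int  # C0..C3


def asil(event: HazardousEvent) -> str:
    """ASIL from the ISO 26262-3 table, expressed as the equivalent S+E+C sum rule."""
    s, e, c = event.severity, event.exposure, event.controllability
    if min(s, e, c) == 0:
        return "QM"
    return {10: "D", 9: "C", 8: "B", 7: "A"}.get(s + e + c, "QM")


def needs_sotif_acceptance_criteria(event: HazardousEvent) -> bool:
    """Simplified assumption: SOTIF reuses the HARA S and C ratings; S0 or C0 needs no further work."""
    return event.severity > 0 and event.controllability > 0


# Illustrative scenario set and ratings (hypothetical, not from a real HARA).
events = [
    HazardousEvent("highway curve at 120 km/h", "excessive lateral acceleration", 3, 4, 3),
    HazardousEvent("parking lot at 10 km/h", "unintended steering", 1, 3, 2),
]

for ev in events:
    print(f"{ev.scenario}: ASIL={asil(ev)}, SOTIF criteria needed={needs_sotif_acceptance_criteria(ev)}")
```

Both functions read the same record. A change to a scenario or rating therefore shows up in both analyses at once, which is the consistency argument made above.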

Although the analyses are performed from different perspectives, they should be carried out in parallel and compared early in the development process. Doing so helps identify any inconsistencies in the interpretation of hazards or scenarios and prevents divergence between functional safety and SOTIF development activities. Aligning the scenario set and hazard definitions early also avoids significant rework later in the development lifecycle and ensures that safety requirements remain consistent across the system architecture.

At this stage of development, the analysis focuses on hazardous vehicle behaviors, without yet determining whether the underlying cause is a fault, a functional insufficiency, or an AI-related error. Once hazards are identified and safety goals are defined, more detailed system-level and component-level analyses can be performed to determine the potential sources of these hazards.

Distinguishing Faults, Functional Insufficiencies, and AI Errors

Once hazards have been identified at the vehicle level, the next step is to analyze the system architecture to determine the potential sources of those hazards. In ML-enabled vehicle systems, these sources typically fall into three categories: faults, functional insufficiencies, and AI errors. While these categories are conceptually distinct, they often produce similar hazardous behaviors at the vehicle level.

Faults are addressed primarily through ISO 26262 functional safety processes. These include random hardware failures and systematic software faults that cause system components to deviate from their intended behavior. Typical examples include compute failures, communication errors, sensor hardware faults, or deterministic software defects. Functional safety development focuses on identifying these faults, detecting them during operation, and ensuring the system can transition to a safe state when they occur.

Functional insufficiencies, addressed through ISO 21448 (SOTIF), arise when the system behaves according to its design but the design itself is insufficient for certain operating conditions. These situations often occur due to environmental limitations, sensor performance constraints, or algorithmic limitations. For example, a perception algorithm may fail to detect a pedestrian under rare lighting conditions, even though the software operates exactly as designed.

Machine learning systems introduce an additional category of risk related to AI errors, which are addressed by ISO/PAS 8800. These errors can arise from dataset limitations, distribution shifts, model generalization limits, or unstable predictions. Because ML models rely on statistical learning rather than deterministic logic, their behavior cannot always be fully predicted or verified using traditional methods.

In practice, the boundaries between these categories are not always clear. A hazardous vehicle behavior may result from a combination of these factors. For example, degraded sensor input may trigger an ML model to produce an incorrect prediction, which then propagates through the system architecture. For this reason, safety engineering must consider all three categories simultaneously rather than treating them as independent domains.
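One way to keep all three categories in view is to record every contributing cause of a hazard together with its category. The set of applicable standards then falls out of the analysis rather than being decided up front. The class and field names below are hypothetical, and the causes loosely follow the degraded-sensor example above.

```python
from dataclasses import dataclass, field
from enum import Enum


class CauseCategory(Enum):
    """Cause categories and the standard that primarily addresses each."""
    FAULT = "ISO 26262"                     # random hardware failures, systematic faults
    FUNCTIONAL_INSUFFICIENCY = "ISO 21448"  # intended design insufficient for a condition
    AI_ERROR = "ISO/PAS 8800"               # dataset limits, distribution shift, generalization


@dataclass
class Cause:
    description: str
    category: CauseCategory


@dataclass
class Hazard:
    behavior: str
    contributing_causes: list[Cause] = field(default_factory=list)

    def applicable_standards(self) -> set[str]:
        """Every standard whose category appears anywhere among the contributing causes."""
        return {cause.category.value for cause in self.contributing_causes}


# Illustrative contributing causes: degraded input feeding a wrong ML prediction, plus a fault.
hazard = Hazard(
    "excessive lateral acceleration",
    [
        Cause("low sun angle causes camera glare", CauseCategory.FUNCTIONAL_INSUFFICIENCY),
        Cause("lane model mispredicts curvature on glare-affected images", CauseCategory.AI_ERROR),
        Cause("corrupted camera frame from a communication error", CauseCategory.FAULT),
    ],
)
print(sorted(hazard.applicable_standards()))
```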

Runtime Safety Architecture and Fault Management

One of the most effective ways to manage safety risks in ML-enabled systems is to build upon the runtime fault management architecture developed under ISO 26262. Functional safety architectures already include mechanisms designed to detect faults, evaluate their safety impact, and trigger appropriate mitigation strategies. These mechanisms provide a strong foundation for addressing functional insufficiencies and AI-related risks as well.

Typical functional safety runtime frameworks include capabilities for:

monitoring system health

detecting faults in hardware and software components

evaluating whether faults affect safety goals

transitioning the vehicle to a safe state or degraded mode

Examples of mitigation strategies include switching to redundant components, limiting system functionality, or initiating a minimal risk maneuver (MRM).
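The loop described above (monitor, detect, evaluate impact, mitigate) can be sketched as a small arbitration function. Everything here is hypothetical: the fault identifiers, the catalogue that records each fault's safety-goal impact, and the ordering of mitigations from least to most restrictive. Unknown faults are treated conservatively as safety relevant.

```python
from enum import IntEnum
from typing import NamedTuple


class Mitigation(IntEnum):
    """Ordered from least to most restrictive, so max() picks the strongest response."""
    NONE = 0
    SWITCH_TO_REDUNDANT = 1
    LIMIT_FUNCTIONALITY = 2
    MINIMAL_RISK_MANEUVER = 3


class FaultInfo(NamedTuple):
    affects_safety_goal: bool
    redundancy_available: bool
    requires_mrm: bool


# Hypothetical fault catalogue, normally derived from the safety analyses.
FAULT_CATALOGUE = {
    "front_camera_no_frames": FaultInfo(True, True, False),
    "radar_crc_errors": FaultInfo(True, False, False),
    "steering_ecu_lost": FaultInfo(True, False, True),
    "logging_disk_full": FaultInfo(False, False, False),
}
UNKNOWN_FAULT = FaultInfo(True, False, False)  # conservative default for unlisted faults


def select_mitigation(active_faults: set[str]) -> Mitigation:
    """Evaluate each detected fault's impact on the safety goals and return the strongest response."""
    worst = Mitigation.NONE
    for fault in active_faults:
        info = FAULT_CATALOGUE.get(fault, UNKNOWN_FAULT)
        if not info.affects_safety_goal:
            continue
        if info.requires_mrm:
            response = Mitigation.MINIMAL_RISK_MANEUVER
        elif info.redundancy_available:
            response = Mitigation.SWITCH_TO_REDUNDANT
        else:
            response = Mitigation.LIMIT_FUNCTIONALITY
        worst = max(worst, response)
    return worst


print(select_mitigation({"front_camera_no_frames"}).name)
print(select_mitigation({"front_camera_no_frames", "radar_crc_errors"}).name)
```

The function takes the maximum over all active faults. A single severe fault, such as loss of the steering ECU here, therefore always overrides milder ones.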

In practice, these same mechanisms can also detect conditions that indicate potential functional insufficiencies or AI errors. For example, degraded sensor inputs, abnormal perception outputs, or inconsistent object tracking results may indicate that the system is operating outside its intended performance envelope. Detecting these conditions allows the system to apply mitigation strategies similar to those used for hardware faults.

By extending the functional safety runtime framework to include detection and response mechanisms for insufficiencies and AI errors, engineers can create a unified safety architecture that handles all three categories of risk.
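Below is a minimal sketch of such a unified architecture. The monitors, metrics, and thresholds are all hypothetical. Monitors for a fault, a functional insufficiency, and an AI error emit the same event type, and a single arbitration function chooses the response without caring which category raised the event.

```python
from dataclasses import dataclass
from enum import Enum, IntEnum


class Category(Enum):
    FAULT = "fault"
    INSUFFICIENCY = "functional insufficiency"
    AI_ERROR = "AI error"


class Response(IntEnum):
    """Ordered from least to most restrictive."""
    NONE = 0
    DEGRADED_MODE = 1
    MINIMAL_RISK_MANEUVER = 2


@dataclass(frozen=True)
class SafetyEvent:
    source: str
    category: Category
    response: Response


def camera_visibility_monitor(contrast: float, min_contrast: float = 0.2) -> list[SafetyEvent]:
    """Low image contrast as a hypothetical proxy for glare or fog (functional insufficiency)."""
    if contrast < min_contrast:
        return [SafetyEvent("camera_visibility", Category.INSUFFICIENCY, Response.DEGRADED_MODE)]
    return []


def track_consistency_monitor(prev_ids: set[int], curr_ids: set[int], max_dropped: int = 3) -> list[SafetyEvent]:
    """Many confirmed tracks vanishing in one cycle hints at unreliable ML detections (AI error)."""
    if len(prev_ids - curr_ids) > max_dropped:
        return [SafetyEvent("track_consistency", Category.AI_ERROR, Response.DEGRADED_MODE)]
    return []


def perception_heartbeat_monitor(ms_since_last_output: float, timeout_ms: float = 100.0) -> list[SafetyEvent]:
    """Classic functional safety timeout check (fault)."""
    if ms_since_last_output > timeout_ms:
        return [SafetyEvent("perception_heartbeat", Category.FAULT, Response.MINIMAL_RISK_MANEUVER)]
    return []


def arbitrate(events: list[SafetyEvent]) -> Response:
    """Single decision path: the most restrictive requested response wins, whatever its category."""
    return max((e.response for e in events), default=Response.NONE)


events = (
    camera_visibility_monitor(contrast=0.12)
    + track_consistency_monitor({1, 2, 3, 4, 5}, {1})
    + perception_heartbeat_monitor(ms_since_last_output=40.0)
)
print(arbitrate(events).name, [e.category.value for e in events])
```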

ML Safety Guardrails and Analytical Safety Mechanisms

Machine learning components are often treated as black-box systems because their internal behavior cannot always be interpreted in deterministic terms. As a result, safety must be enforced through external monitoring mechanisms and guardrails that evaluate the plausibility and safety of ML outputs. These mechanisms generally fall into two categories: AI error detection mechanisms and safety guardrails.

AI error detection mechanisms monitor inputs or outputs to identify conditions where ML predictions may be unreliable. Examples include detecting degraded sensor inputs such as glare, occlusion, or sensor noise. Monitoring prediction confidence levels or identifying distribution shifts in sensor data are also common approaches.
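Two of these detectors are sketched below under stated assumptions. The confidence monitor flags a frame when too large a fraction of detections fall below a score threshold.

The distribution-shift check uses the Population Stability Index (PSI), a generic drift metric chosen here for illustration rather than anything prescribed by ISO/PAS 8800. It compares a live histogram of one input feature (here, hypothetical image brightness values) against the training distribution. A PSI above roughly 0.25 is a commonly quoted rule of thumb for a significant shift. Thresholds, bin edges, and sample values are all hypothetical.

```python
import math


def histogram(values: list[float], edges: list[float]) -> list[float]:
    """Fraction of values per bin; values outside the edges are clamped into the end bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = 0
        while idx < len(counts) - 1 and v >= edges[idx + 1]:
            idx += 1
        counts[idx] += 1
    return [c / len(values) for c in counts]


def population_stability_index(ref: list[float], live: list[float], eps: float = 1e-6) -> float:
    """PSI = sum((live - ref) * ln(live / ref)); eps avoids log(0) for empty bins."""
    return sum((p_live - p_ref) * math.log((p_live + eps) / (p_ref + eps)) for p_ref, p_live in zip(ref, live))


def low_confidence(scores: list[float], threshold: float = 0.5, max_fraction: float = 0.3) -> bool:
    """True when more than max_fraction of detection scores fall below threshold."""
    if not scores:
        return False
    return sum(s < threshold for s in scores) / len(scores) > max_fraction


# Hypothetical brightness samples (0-255): training data vs. a night-time drive.
EDGES = [0, 64, 128, 192, 256]
ref = histogram([30, 90, 100, 140, 150, 160, 200, 210], EDGES)
live = histogram([10, 20, 25, 30, 40, 70, 15, 5], EDGES)

PSI_LIMIT = 0.25  # rule-of-thumb threshold (assumption)
shift_detected = population_stability_index(ref, live) > PSI_LIMIT
print(f"distribution shift: {shift_detected}, low confidence: {low_confidence([0.9, 0.35, 0.4])}")
```

In a real system the reference histogram would come from the training or validation dataset. The feature being monitored should be one that is plausibly linked to model performance.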

Safety guardrails go one step further by actively constraining system behavior to ensure it remains within physically or logically plausible limits. These guardrails are typically implemented using deterministic algorithms and operate independently of the ML models themselves. Examples include:

Trajectory feasibility checks - Generated trajectories must obey vehicle dynamics

Object tracking plausibility - Objects cannot move unrealistically between frames

Map consistency checks - Detected objects must align with drivable areas
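The three guardrails listed above are simple deterministic checks. A minimal sketch follows, with several assumptions:

- The acceleration and speed limits are hypothetical placeholders.
- The trajectory format is assumed.
- The map check uses a standard ray-casting point-in-polygon test against an assumed drivable-area polygon. This fits detected vehicles, not pedestrians on a sidewalk.

```python
import math
from dataclasses import dataclass


@dataclass
class TrajectoryPoint:
    t: float          # time [s]
    speed: float      # longitudinal speed [m/s]
    curvature: float  # path curvature [1/m]


def trajectory_feasible(traj: list[TrajectoryPoint], max_lat_acc: float = 4.0, max_long_acc: float = 6.0) -> bool:
    """Reject trajectories whose lateral (v^2 * kappa) or longitudinal (dv/dt) acceleration exceeds limits."""
    if any(p.speed ** 2 * abs(p.curvature) > max_lat_acc for p in traj):
        return False
    return all(abs(b.speed - a.speed) / (b.t - a.t) <= max_long_acc for a, b in zip(traj, traj[1:]))


def track_plausible(prev_xy: tuple[float, float], curr_xy: tuple[float, float], dt: float,
                    max_speed: float = 70.0) -> bool:
    """An object cannot move faster than max_speed [m/s] between two frames dt seconds apart."""
    return math.dist(prev_xy, curr_xy) / dt <= max_speed


def on_drivable_area(xy: tuple[float, float], polygon: list[tuple[float, float]]) -> bool:
    """Ray-casting point-in-polygon test against a drivable-area polygon taken from the map."""
    x, y = xy
    inside = False
    for (x1, y1), (x2, y2) in zip(polygon, polygon[1:] + polygon[:1]):
        if (y1 > y) != (y2 > y):  # edge straddles the horizontal ray through the point
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


# Hypothetical inputs: 30 m/s on a 100 m radius needs 9 m/s^2 of lateral acceleration.
sharp = [TrajectoryPoint(0.0, 30.0, 0.01), TrajectoryPoint(1.0, 30.0, 0.01)]
lane = [(0.0, 0.0), (100.0, 0.0), (100.0, 7.0), (0.0, 7.0)]
print(trajectory_feasible(sharp), track_plausible((0.0, 0.0), (20.0, 0.0), 0.1), on_drivable_area((50.0, 3.5), lane))
```

The lateral-acceleration check is a deterministic counterpart to the excessive lateral acceleration hazard used as the running example. It rejects the unsafe output regardless of whether a fault, an insufficiency, or an ML error produced it.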

Because these mechanisms enforce safety constraints around ML outputs, they often function as safety mechanisms within the functional safety architecture. As such, they may inherit the ASIL of the safety goals they protect and must therefore be developed according to functional safety processes. This approach allows ML models to be integrated into safety-critical systems while maintaining deterministic safeguards around their behavior.

Safety Analysis Techniques (FMEA, FTA, CTA, STPA)

Several safety analysis techniques are used to identify faults, functional insufficiencies, and AI-related risks throughout system development. Each technique provides a different perspective on how hazardous conditions may arise.

Functional safety development typically relies on methods such as Failure Mode and Effects Analysis (FMEA) and Fault Tree Analysis (FTA). FMEA focuses on analyzing potential failure modes at the component level and evaluating their effects on system behavior. It is particularly useful for identifying how faults in hardware or software components may propagate through the system architecture. FTA takes a top-down approach by analyzing how combinations of faults can lead to system-level hazards. This method helps engineers understand fault propagation paths and identify critical failure combinations.
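The top-down decomposition of FTA can be expressed as a tree of AND/OR gates. The sketch below computes two things. The first is the minimal cut sets, which are the critical failure combinations. The second is a top-event probability, assuming independent basic events.

The tree and the per-hour probabilities are hypothetical. Quantitative evaluation of this kind applies to random hardware failures. Systematic software faults are handled qualitatively.

```python
from dataclasses import dataclass, field
from math import prod
from typing import Optional


@dataclass
class Event:
    name: str
    probability: Optional[float] = None  # basic events only
    gate: Optional[str] = None           # "AND" or "OR" for intermediate events
    children: list["Event"] = field(default_factory=list)

    def p(self) -> float:
        """Probability of this event, assuming independent basic events."""
        if self.gate is None:
            return self.probability
        child_ps = [c.p() for c in self.children]
        if self.gate == "AND":
            return prod(child_ps)
        if self.gate == "OR":
            return 1.0 - prod(1.0 - q for q in child_ps)
        raise ValueError(f"unknown gate: {self.gate}")

    def cut_sets(self) -> list[frozenset[str]]:
        """Minimal sets of basic events that together cause this event."""
        if self.gate is None:
            return [frozenset([self.name])]
        child_sets = [c.cut_sets() for c in self.children]
        if self.gate == "OR":
            combined = {cs for sets in child_sets for cs in sets}
        elif self.gate == "AND":
            combined = {frozenset()}
            for sets in child_sets:
                combined = {a | b for a in combined for b in sets}
        else:
            raise ValueError(f"unknown gate: {self.gate}")
        # Drop any cut set that strictly contains another one.
        return [cs for cs in combined if not any(other < cs for other in combined)]


top = Event("unintended lateral acceleration", gate="OR", children=[
    Event("corrupted steering command", gate="AND", children=[
        Event("main ECU fault", 1e-5),
        Event("monitor ECU fault", 1e-5),
    ]),
    Event("steering actuator fault", 1e-8),
])
print(sorted(sorted(cs) for cs in top.cut_sets()))
print(f"top event probability per hour: {top.p():.3e}")
```

The cut sets show the structure immediately. The single-point actuator fault dominates, while the dual ECU fault only matters as a combination.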

For SOTIF and ML safety analysis, scenario-based approaches are particularly important. One method that has proven useful in practice is Systems-Theoretic Process Analysis (STPA). Unlike traditional failure-based analyses, STPA focuses on identifying unsafe control actions that could lead to hazardous system behavior. These unsafe control actions may arise from multiple sources: hardware or software faults, functional insufficiencies in system design, or incorrect outputs from ML components. For ML components, the analysis can also capture the underlying reasons for incorrect outputs, such as insufficiencies in the training data or training process. Because STPA focuses on system interactions rather than individual component failures, it can be applied effectively across functional safety, SOTIF, and AI safety analyses.
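A first STPA step is to enumerate candidate unsafe control actions (UCAs). Each control action is crossed with the four standard STPA guide types and the relevant operating contexts. The analyst then keeps the hazardous combinations and records their causal factors.

In the sketch below, the controller, action, contexts, hazard, and causal factors are all hypothetical. Grouping causal factors by fault, functional insufficiency, and AI error is one way to reflect the point above that a single UCA can have causes in all three domains.

```python
from dataclasses import dataclass, field
from itertools import product

# The four standard STPA guide types for unsafe control actions.
UCA_TYPES = (
    "not provided when needed",
    "provided when it causes a hazard",
    "provided too early, too late, or out of order",
    "stopped too soon or applied too long",
)


@dataclass
class UnsafeControlAction:
    controller: str
    action: str
    uca_type: str
    context: str
    hazards: list[str] = field(default_factory=list)
    # Causal factors grouped by category: fault, functional insufficiency, AI error.
    causal_factors: dict[str, list[str]] = field(default_factory=dict)


def candidate_ucas(controller: str, actions: list[str], contexts: list[str]) -> list[UnsafeControlAction]:
    """Enumerate every (action, guide type, context) combination for the analyst to assess."""
    return [UnsafeControlAction(controller, a, t, c) for a, t, c in product(actions, UCA_TYPES, contexts)]


candidates = candidate_ucas("path planner", ["steer left"], ["pedestrian in left lane", "left lane clear"])

# Analyst judgment (hypothetical): this combination is hazardous.
uca = next(u for u in candidates
           if u.uca_type == "provided when it causes a hazard" and u.context == "pedestrian in left lane")
uca.hazards.append("H1: vehicle violates minimum distance to a pedestrian")
uca.causal_factors = {
    "fault": ["object list corrupted by a bus communication error"],
    "functional insufficiency": ["pedestrian occluded by a parked van"],
    "AI error": ["detector trained on too few partially occluded pedestrians"],
}
print(len(candidates), "|", uca.uca_type, "|", uca.context)
```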

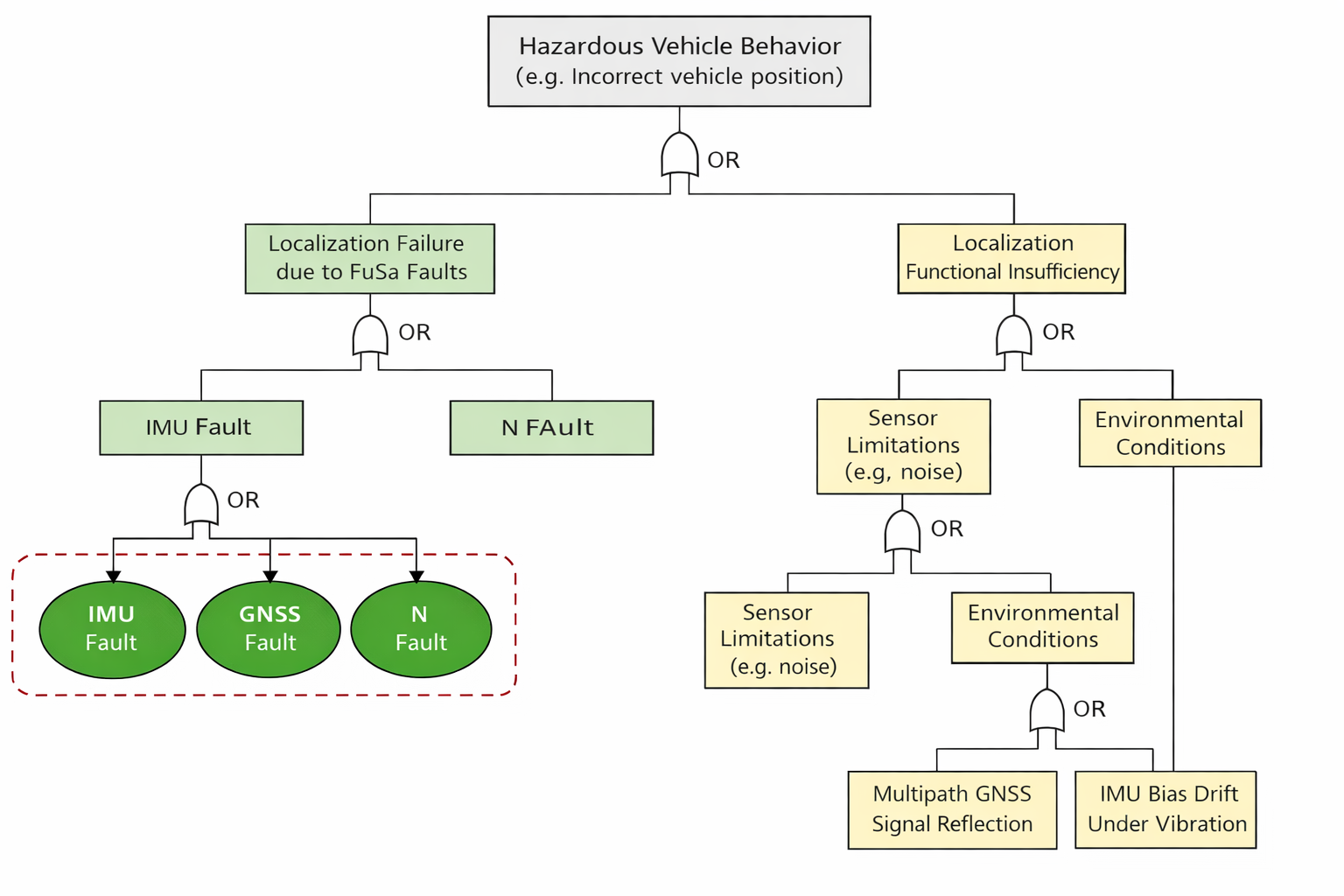

From the SOTIF perspective, additional techniques are often used to analyze functional insufficiencies and their triggering conditions. One such method is Cause Tree Analysis (CTA), which is conceptually similar to Fault Tree Analysis but focuses on identifying causal factors that can lead to hazardous behavior even when the system operates according to its intended design. CTA helps trace how perception limitations, environmental conditions, or algorithmic interpretation errors may propagate through the system and ultimately produce unsafe vehicle behavior.

In practice, CTA and FTA can be integrated into a single analytical structure. Both approaches begin with a hazardous system-level event and decompose the potential causes leading to that event. While traditional FTA branches focus on hardware and software faults addressed through functional safety, CTA branches can represent functional insufficiencies, environmental triggering conditions, or AI-related performance limitations addressed through SOTIF and AI safety analyses. Consolidating these analyses into a single tree provides a unified view of how hazardous behavior may arise across the system and avoids maintaining separate artifacts for faults and insufficiencies. This integrated approach also improves traceability between vehicle-level hazards and the different types of system-level causes that must be mitigated through the safety architecture.
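Below is a sketch of such a consolidated tree, assuming a hypothetical node structure in which each leaf is tagged as one of the following:

- a fault
- a functional insufficiency
- a triggering condition
- an AI error

Gates are omitted because the insufficiency branches are usually evaluated qualitatively. The point here is traceability: every leaf keeps its full path back to the vehicle-level hazard.

```python
from dataclasses import dataclass, field
from typing import Iterator


@dataclass
class Node:
    name: str
    kind: str = "event"  # leaves: "fault", "insufficiency", "triggering_condition", "ai_error"
    children: list["Node"] = field(default_factory=list)


def trace(node: Node, path: tuple[str, ...] = ()) -> Iterator[tuple[Node, tuple[str, ...]]]:
    """Yield every leaf cause together with its path from the top-level hazard."""
    path = path + (node.name,)
    if not node.children:
        yield node, path
    for child in node.children:
        yield from trace(child, path)


def causes_by_kind(top: Node) -> dict[str, list[str]]:
    """Group leaf causes by kind, keeping the full trace to the vehicle-level hazard."""
    grouped: dict[str, list[str]] = {}
    for leaf, path in trace(top):
        grouped.setdefault(leaf.kind, []).append(" -> ".join(path))
    return grouped


# Hypothetical unified FTA/CTA tree for the running lateral-acceleration example.
top = Node("excessive lateral acceleration", children=[
    Node("incorrect steering command", children=[
        Node("steering ECU random hardware fault", "fault"),
    ]),
    Node("wrong lane geometry estimate", children=[
        Node("low sun glare on camera", "triggering_condition"),
        Node("lane model fails on faded markings", "ai_error"),
    ]),
    Node("curvature limit not designed for low-friction roads", "insufficiency"),
])

for kind, traces in causes_by_kind(top).items():
    print(kind, traces)
```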

ISO 21448 also describes an FMEA-style analysis for functional insufficiencies, where the relationship between triggering conditions and system limitations is analyzed. This analysis can be performed in two directions: identifying the triggering conditions that may lead to a functional insufficiency, and identifying the operational scenarios in which a known functional limitation may become safety relevant. These analyses help identify conditions within the Operational Design Domain (ODD) where system performance may be insufficient and guide the development of mitigation strategies or safety mechanisms.
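ISO 21448 does not dictate a data format for this analysis. The sketch below simply stores hypothetical pairs of triggering condition and functional insufficiency, indexes them in both directions, and links them to a hypothetical set of ODD scenarios. The second direction then answers which scenarios make a known limitation safety relevant.

```python
from collections import defaultdict

# Hypothetical analysis results: which triggering condition activates which insufficiency.
LINKS = [
    ("low sun angle", "lane detection degraded by glare"),
    ("wet road at night", "lane detection degraded by glare"),
    ("heavy rain", "radar range reduced"),
    ("faded lane markings", "lane boundary missed"),
]

# Hypothetical ODD scenarios and the triggering conditions present in each.
SCENARIOS = {
    "highway exit at sunset": {"low sun angle"},
    "urban road on a rainy night": {"wet road at night", "heavy rain"},
    "rural road in daylight": {"faded lane markings"},
}

forward = defaultdict(set)   # triggering condition -> functional insufficiencies
backward = defaultdict(set)  # functional insufficiency -> triggering conditions
for condition, insufficiency in LINKS:
    forward[condition].add(insufficiency)
    backward[insufficiency].add(condition)


def insufficiencies_triggered_by(condition: str) -> set[str]:
    """Direction 1: from a triggering condition to the insufficiencies it can activate."""
    return set(forward.get(condition, set()))


def scenarios_exposing(insufficiency: str) -> list[str]:
    """Direction 2: ODD scenarios in which a known limitation becomes safety relevant."""
    conditions = backward.get(insufficiency, set())
    return sorted(name for name, present in SCENARIOS.items() if present & conditions)


print(scenarios_exposing("lane detection degraded by glare"))
```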

Together, these techniques provide complementary perspectives for analyzing safety risks across faults, functional insufficiencies, and AI-related errors within ML-enabled vehicle systems.

Figure 2. FTA and CTA unified example

Interaction Between Safety Requirements

In practice, safety requirements derived from functional safety, SOTIF, and ML safety analyses often influence one another. These interactions occur throughout the system development lifecycle and must be carefully managed to ensure consistency across the system architecture.

For example, SOTIF analysis may identify performance constraints related to perception algorithms or sensor limitations. These constraints may impose latency or accuracy requirements that influence the system's functional safety timing budgets.

Similarly, ML models may require specific computational resources or processing times to produce reliable outputs. These requirements must remain consistent with functional safety parameters such as Fault Tolerant Time Interval (FTTI) and system response times.
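A back-of-the-envelope check helps catch these conflicts early. In ISO 26262 terms, the fault detection time interval plus the fault reaction time interval must not exceed the FTTI.

The sketch assumes that detecting an ML-related error takes the model's inference latency plus a debounce over several monitor cycles. All numbers are hypothetical placeholders, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class TimingBudget:
    """Detection and reaction timing for an ML-based function (all values in ms)."""
    ml_inference_latency: float  # time for the model to produce an output
    monitor_period: float        # cycle time of the runtime monitor
    debounce_cycles: int         # consecutive bad cycles before an error is confirmed
    reaction_time: float         # confirmation until degraded mode / safe state is reached

    def detection_interval(self) -> float:
        return self.ml_inference_latency + self.debounce_cycles * self.monitor_period

    def slack(self, ftti: float) -> float:
        """Remaining margin; negative means the requirement set is inconsistent."""
        return ftti - (self.detection_interval() + self.reaction_time)


FTTI_MS = 400.0  # hypothetical FTTI from the functional safety concept
current = TimingBudget(ml_inference_latency=80.0, monitor_period=20.0, debounce_cycles=3, reaction_time=200.0)
larger_model = TimingBudget(ml_inference_latency=180.0, monitor_period=20.0, debounce_cycles=3, reaction_time=200.0)

for name, budget in [("current", current), ("larger model", larger_model)]:
    print(f"{name}: slack = {budget.slack(FTTI_MS):.0f} ms")
```

The second case shows how a seemingly local ML design choice, such as a larger and slower model, can silently break a functional safety timing requirement.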

If these constraints are not aligned, inconsistencies may arise between the system architecture and the safety requirements derived from different analyses. Resolving these inconsistencies later in development can lead to significant redesign efforts.

For this reason, safety engineers must ensure that requirements derived from functional safety, SOTIF, and ML safety analyses are developed in coordination rather than in isolation.

Conclusion

The development of ML-enabled vehicle systems requires a comprehensive safety approach that integrates ISO 26262, ISO 21448, and ISO/PAS 8800. While these standards address different categories of risk, their practical application reveals significant overlap across the system architecture.

Hazards must first be analyzed at the vehicle behavior level before determining whether they originate from faults, functional insufficiencies, or AI errors. From there, engineers can use a combination of functional safety mechanisms, scenario-based analysis, and ML safety guardrails to mitigate potential risks.

Extending the functional safety runtime architecture to detect and respond to functional insufficiencies and AI errors provides a practical framework for managing safety across these domains. Deterministic safety mechanisms surrounding ML components play a critical role in this architecture, enabling ML models to be integrated into safety-critical systems while maintaining predictable system behavior.

Ultimately, achieving safe ML-enabled automated driving systems requires a holistic safety engineering process in which functional safety, SOTIF, and AI safety are developed together rather than independently. Engineers who understand how these standards interact at both the system and component levels are better positioned to design robust architectures capable of handling the complex challenges introduced by machine learning in safety-critical automotive systems.